Data sources: AmbitionBox India Salary Data (May 2026), Glassdoor India, LinkedIn India Job Postings (April 2026), NASSCOM GCC Report FY2025, LangChain GitHub Advisories, DSPy Documentation v3.1

TL;DR: Six technical skills now separate the ₹18 LPA ML engineer from the ₹45 LPA AI systems engineer in India — and none of them are prompt engineering. Indian GCCs (JPMorgan, Walmart Global Tech, Goldman Sachs, Adobe) and product companies (Flipkart, PhonePe, Zepto) are actively posting for these competencies. This guide gives you the working code, the salary benchmarks, and the learning path with ₹ costs for each one.

The Bifurcation Happening Right Now in Indian AI Hiring

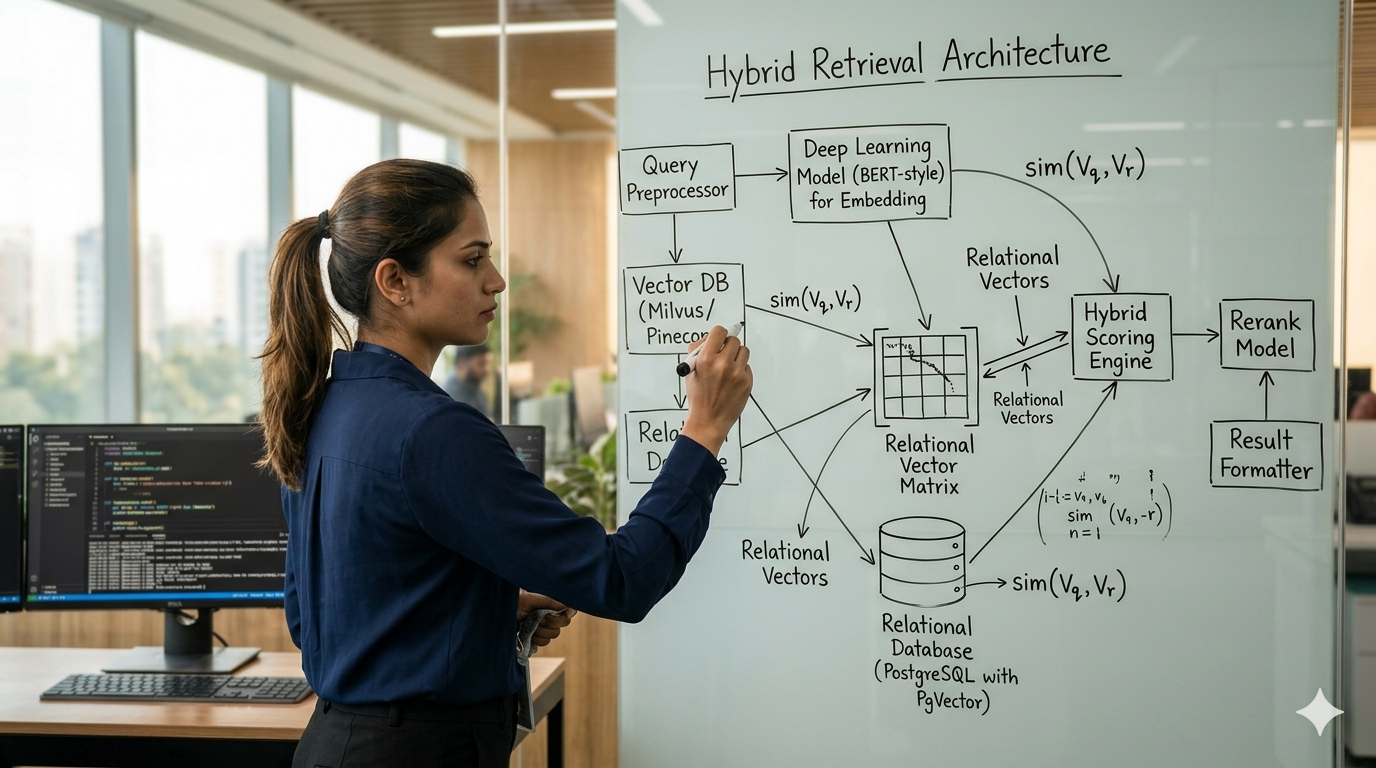

Two senior engineers at a Bengaluru GCC interview for the same AI platform role. Both have four years of ML experience. Both know Python, TensorFlow, and have built RAG pipelines.

The panel asks: “Our current retrieval system hallucinates on schema-bound financial queries. Design a solution that handles both unstructured policy documents and structured transaction relationships.”

Engineer A describes a vector database with better chunking. Confident delivery. Clean slides.

Engineer B sketches a hybrid retrieval architecture: vector search for unstructured breadth, a knowledge graph traversal layer for structured relationships, and entity linking as the bridge between them. Explains why pure vector search is architecturally blind to relational logic. Mentions idempotency in the agentic retry layer. No slides — just a whiteboard and clear reasoning.

Engineer B gets the offer at ₹42 LPA. Engineer A gets honest feedback: “Strong on execution, needs depth on architecture.”

This is the split NASSCOM’s GCC Report FY2025 describes when it notes that India’s 1,700+ GCCs are increasingly hiring for “AI systems design and integration” rather than “ML implementation.” The implementation layer is commoditised. The architecture layer is not.

Here are the six skills that define the architecture layer, what each pays in India, and the fastest credentialled path to each one.

The India Hiring Landscape: Who Is Actually Posting for These Skills

Before the technical breakdown, the market context.

LinkedIn India job postings (April 2026) show the following active role categories at Indian locations:

- JPMorgan India (Mumbai/Hyderabad): AI/ML Engineer — LLM Orchestration, LangGraph, agentic workflow design

- Walmart Global Tech (Bengaluru): Senior AI Engineer — RAG systems, knowledge graph integration, inference optimisation

- Goldman Sachs (Bengaluru): AI Platform Engineer — LLM security, prompt compilation, adversarial testing

- Adobe India (Noida/Bengaluru): ML Engineer — retrieval systems, ColBERT, multimodal search

- Flipkart (Bengaluru): AI Systems Engineer — real-time recommendation, hybrid retrieval, LLM serving at scale

- PhonePe (Bengaluru): Senior Backend Engineer — AI pipeline orchestration, state machine design, LLM guardrails

India Salary Benchmarks (AmbitionBox + Glassdoor India, May 2026):

| Experience Band | Role Title | Salary Range (LPA) | Premium Skills |

|---|---|---|---|

| 1–3 years | ML/AI Engineer | ₹12–22 | LangChain, basic RAG |

| 3–6 years | Senior AI Engineer | ₹25–45 | LangGraph, GraphRAG, DSPy |

| 6–10 years | Staff / Principal AI Engineer | ₹50–85 | Full-stack AI architecture, security |

| GCC specialist premium | Any level with verified agentic AI | +20–30% above band | Agentic systems, LLM security, inference optimisation |

The ₹30–60 LPA range — which is where this guide is targeted — maps to the 3–6 year band at GCCs and funded product companies. Every skill below is a documented requirement in that compensation tier.

Skill 1: Programmatic Prompt Optimisation (DSPy 3.1)

Why Manual Prompting Is an Engineering Dead End

For years, engineers tweaked prompts by hand — run it once, adjust the wording, run it again. This is not engineering; it is guesswork that breaks the moment you change the underlying model.

DSPy (Declarative Self-improving Python) eliminates manual prompt crafting entirely. It separates your program logic from its textual representation and treats LLM calls as differentiable operations optimisable against a metric.

The practical consequence: when you swap GPT-4o for Gemini 1.5 Pro, you do not rewrite your prompts. You recompile. DSPy 3.1.0, released January 2026, formalised this model-agnostic compilation as the production standard.

The MIPROv2 Detail Most Tutorials Skip

Basic DSPy tutorials cover simple optimisers. The production-grade tool is MIPROv2, which uses Bayesian Optimisation to simultaneously explore instruction variants and few-shot examples to find the global performance maximum.

Critical implementation detail: you must provide a distinct validation set separate from your training data. If you use training data for validation, you overfit the Bayesian search and degrade production performance.

import dspy

from dspy.teleprompt import MIPROv2

from dspy.datasets.gsm8k import gsm8k_metric

# 1. Configure the Language Model

lm = dspy.LM('openai/gpt-4o-2025-11-20', api_key='YOUR_KEY')

dspy.configure(lm=lm)

# 2. Define the Signature

class RAGSignature(dspy.Signature):

"""Answer questions based on retrieved context with precise reasoning."""

context = dspy.InputField(desc="relevant facts from knowledge base")

question = dspy.InputField()

reasoning = dspy.OutputField(desc="step-by-step logical deduction")

answer = dspy.OutputField(desc="short, fact-based answer")

# 3. Define the Module (required before compilation)

class RAGModule(dspy.Module):

def __init__(self):

self.retrieve = dspy.Retrieve(k=3)

self.generate = dspy.ChainOfThought(RAGSignature)

def forward(self, question):

context = self.retrieve(question).passages

return self.generate(context=context, question=question)

# 4. MIPROv2 Optimisation — 'auto=medium' runs ~50 Bayesian trials

optimizer = MIPROv2(

metric=gsm8k_metric,

auto="medium",

num_threads=8

)

# 5. Compile — trainset and valset must be distinct datasets

optimized_rag = optimizer.compile(

RAGModule(),

trainset=trainset, # your training examples

valset=valset, # separate validation set — do not reuse trainset

max_bootstrapped_demos=4,

max_labeled_demos=4

)DSPy vs Legacy LangChain

| Feature | LangChain (Legacy) | DSPy 3.1 (2026 Standard) |

|---|---|---|

| Prompting | Manual f-strings | Typed Signatures |

| Tuning | Trial and error | MIPROv2 Bayesian compilation |

| Model swap | Rewrite prompts | Recompile |

| Production reliability | Fragile | Metric-verified |

India learning path: DeepLearning.AI “Prompt Engineering with DSPy” short course (~₹2,800 for one month of access). DSPy official documentation (dspy.ai) is free and covers MIPROv2 with worked examples. Estimated time to working proficiency: 3–4 weeks.

Skill 2: Agentic Orchestration (LangGraph 1.0)

Why Linear Chains Cannot Build Production Agents

Legacy LangChain chains are directed acyclic graphs. They process input A to output B and discard all state. Real agentic tasks — “write code, run tests, fix the failures, repeat until it passes” — are inherently cyclic. They require structural memory across multiple turns.

LangGraph 1.0.6 (released late 2025) formalises the StateGraph primitive as the production standard for agentic workflows. Every node in the graph reads from and writes to a shared state dictionary, enabling loops, conditional branching, and multi-step recovery.

The Time-Travel Debugging Feature

LangGraph’s checkpointing system persists agent state at every step. If an agent fails at Step 4, you do not restart. You load the checkpoint from Step 3, edit the state dictionary directly, and resume. For production debugging of long-running agents, this is operationally critical.

Two Errors That Dominate Production Deployments

Error 1: GraphRecursionError

Recursion limit of 25 reached without hitting a stop condition.This is a safety guardrail, not a bug. Set recursion_limit explicitly in your runtime config:

config = {"recursion_limit": 100}

for output in app.stream(inputs, config=config):

passError 2: GraphLoadError in Deployment

Failed to load graph... No module named 'agent'Your directory structure is missing __init__.py files. LangGraph’s graph builder cannot resolve local imports unless folders are treated as Python packages. Add an empty __init__.py to every directory in your agent module tree.

India learning path: LangChain Academy (academy.langchain.com) — free, with a dedicated LangGraph course. DeepLearning.AI “AI Agents in LangGraph” short course (~₹2,800). The LangGraph GitHub repository contains production examples for Bengaluru-scale deployments. Estimated time to working proficiency: 4–5 weeks.

Skill 3: Structural Grounding (GraphRAG)

The Hard Accuracy Ceiling of Pure Vector Search

Vector databases work by semantic proximity — they retrieve chunks that are about the same thing. They are a bag of facts, not a reasoning engine.

Ask a vector system: “How does supply chain risk in Q3 compare to Q1, and which suppliers contributed to both?” It retrieves chunks containing “Q3” and “Q1” separately. It has no mechanism to compare them, because comparison requires traversing a relationship — and relationships are not stored in embedding space.

FalkorDB’s published benchmarks demonstrate this failure mode on schema-bound queries where pure vector retrieval performance collapses to near-zero while graph-augmented retrieval maintains high accuracy. (Source: FalkorDB GraphRAG benchmark blog, falkordb.com/blog)

GraphRAG solves this by building a knowledge graph from your documents — entities become nodes, relationships become edges — and routing relational queries through graph traversal rather than vector similarity.

The Winning Pattern: Hybrid Retrieval

Do not choose between vector and graph. The production pattern is both:

- Vector search for unstructured breadth: “Find all documents about pricing policy.”

- Graph traversal for structured depth: “Show me the relationship between pricing policy, region, and exception approvals.”

- Entity linking as the bridge: Run Named Entity Recognition (NER) on vector results to map retrieved text to nodes in your knowledge graph.

India learning path: Microsoft’s GraphRAG open-source library (github.com/microsoft/graphrag) — free. Neo4j Graph Academy (graphacademy.neo4j.com) offers a free “Graph Data Science” certification. For paid depth: Coursera’s “Knowledge Graphs” specialisation (~₹3,200/month). Estimated time to working proficiency: 5–6 weeks.

Skill 4: High-Fidelity Retrieval (ColBERTv2)

Why Single-Vector Embeddings Lose Meaning

Standard dense retrieval compresses an entire document into one vector. This is lossy compression. A 5,000-word legal contract becomes a single point in embedding space, and semantic nuance is lost.

ColBERTv2 (from Stanford NLP) retains token-level embeddings for every word in a document and performs late interaction at search time — matching individual query tokens against individual document tokens.

The practical consequence: ColBERT correctly distinguishes “not guilty” from “not guilty by reason of insanity” because it matches insanity in the query directly to insanity in the document. Dense retrieval conflates them into similar embedding neighbourhoods.

For Indian BFSI and legal tech applications — two of the fastest-growing GCC segments — this distinction is directly compliance-relevant.

Implementation via RAGatouille

Use RAGatouille, which wraps ColBERT for production use without requiring you to manage the token interaction layer manually.

Production pattern: Retrieve-then-Rerank. Use fast dense vectors to get the top 100 candidate documents, then use ColBERT to rerank the top 10.

from ragatouille import RAGPretrainedModel

# Load the pretrained ColBERTv2 model

RAG = RAGPretrainedModel.from_pretrained("colbert-ir/colbertv2.0")

# Index your documents (run once, persist the index)

RAG.index(

collection=your_document_list,

index_name="compliance_docs",

max_document_length=256,

split_documents=True

)

# Token-level search at query time

results = RAG.search(

query="Compliance requirements for Q3 audit disclosures",

k=5

)India learning path: Stanford CS224U (Natural Language Understanding) — free on YouTube. Hugging Face NLP course (huggingface.co/learn/nlp-course) — free and covers retrieval in depth. RAGatouille documentation (github.com/bclavie/RAGatouille) — free. Estimated time to working proficiency: 4 weeks with prior NLP background.

Skill 5: AI Security and Serialisation Defence

The LLM Security Landscape in 2026

AI security is no longer a perimeter problem. Vulnerabilities now exist at the code level inside the LLM application stack itself.

Indirect Prompt Injection is the dominant attack vector: a malicious instruction embedded in retrieved content that hijacks an agent’s behaviour. When your RAG pipeline retrieves an attacker-controlled document containing instruction-like text, that content enters the LLM context and can override system instructions.

LangChain Core has had multiple published CVEs addressing deserialisation vulnerabilities — where maliciously crafted lc keys in cached or logged data can force the application to instantiate arbitrary classes or expose environment variables. Check the LangChain Core security advisories page (github.com/langchain-ai/langchain/security/advisories) and ensure your langchain-core version is current.

The Mitigation Pattern

# Step 1: Verify your current version

# Run in terminal: pip show langchain-core

# Check against the latest version at: pypi.org/project/langchain-core/

# Step 2: Safe deserialisation — always restrict valid namespaces

from langchain_core.load import load

data = load(

user_input_json,

secrets_from_env=False,

valid_namespaces=["langchain_core.messages"] # whitelist only known safe classes

)

# Critical: many internal caching layers call load() automatically.

# Restricting valid_namespaces protects against automatic deserialisation

# even when you don't call load() directly in your own code.OWASP LLM Top 10: The Framework Indian GCCs Are Standardising On

The OWASP LLM Top 10 (2025 edition, owasp.org/www-project-top-10-for-large-language-model-applications) is becoming the security compliance framework for LLM applications at Indian GCCs operating under global security policies. Familiarise yourself with: LLM01 (Prompt Injection), LLM02 (Insecure Output Handling), and LLM06 (Sensitive Information Disclosure) — the three most commonly exploited in production RAG systems.

India learning path: OWASP LLM Top 10 documentation (free). SANS Institute “AI Security Fundamentals” (~₹28,000 for the full course, but the free introductory materials cover 80% of interview requirements). For a faster free path: snyk.io/learn/ai-security covers the LangChain-specific vulnerability classes with worked examples. Estimated time to interview-ready knowledge: 2–3 weeks.

Skill 6: Hardware-Aware Inference Optimisation

The Inference Cost Problem at India Scale

Python’s interpreter overhead becomes a genuine cost problem when you are serving LLM inference at scale. Indian product companies running GenAI features for 50–100 million users (Flipkart, Swiggy, PhonePe) are acutely aware of GPU cost per query.

torch.compile acts as a JIT (Just-In-Time) compiler. It converts eager PyTorch execution into fused, optimised kernels — reducing interpreter overhead and enabling GPU-level optimisation passes.

import torch

# Basic compilation — start here

model = torch.compile(model)

# Hardware-specific: if on consumer GPUs (RTX 3080/3090 series)

# force fp16 and disable max-autotune to avoid Triton kernel failures

model = torch.compile(

model,

mode="reduce-overhead", # safer on 30-series GPUs

dynamic=True

)

# If you see "Triton kernel compilation failed",

# switch to: torch.compile(model, backend="eager") as a fallbackThe 30-series GPU constraint: In practical testing, torch.compile with max-autotune mode fails on RTX 3090 hardware when fp8 quantisation is requested. Force fp16 and use reduce-overhead mode on any Ampere-generation GPU (30-series). Ada Lovelace (40-series) and Hopper (H100) handle max-autotune correctly.

Mojo: The Compute Kernel Language to Watch

Modular’s Mojo language offers C++-level performance with Python-compatible syntax, targeted specifically at AI compute workloads. It is not a Python replacement — it is a language for writing the custom kernels that handle your hottest inference paths when PyTorch’s built-in operations are too slow.

The current 2026 production pattern: keep your orchestration layer in Python, identify your 2–3 most compute-intensive operations via profiling (torch.profiler), and rewrite those specific operations as Mojo kernels. Indian ML infrastructure teams at Flipkart and Walmart Global Tech are beginning to adopt this pattern for custom attention implementations.

Do not rewrite your entire application in Mojo. Profile first, rewrite the bottleneck, measure the gain.

India learning path: PyTorch torch.compile tutorial (pytorch.org/tutorials/intermediate/torch_compile_tutorial.html) — free. Modular Mojo documentation (docs.modular.com/mojo) — free, with a browser-based playground. fast.ai “Practical Deep Learning for Coders” (fast.ai) — free, covers hardware-aware training and inference depth. Estimated time to working proficiency: 4–6 weeks for torch.compile; Mojo is an ongoing investment.

The Full Learning Path Summary

| Skill | Free Path | Paid Path (₹) | Time to Proficiency |

|---|---|---|---|

| DSPy 3.1 / MIPROv2 | dspy.ai documentation | DeepLearning.AI short course (~₹2,800) | 3–4 weeks |

| LangGraph 1.0 | LangChain Academy (free) | DeepLearning.AI “AI Agents in LangGraph” (~₹2,800) | 4–5 weeks |

| GraphRAG | Microsoft GraphRAG GitHub (free) | Neo4j Graph Academy cert + Coursera (~₹3,200/month) | 5–6 weeks |

| ColBERTv2 | Hugging Face NLP course (free) | Stanford CS224U YouTube (free) | 4 weeks |

| LLM Security | OWASP LLM Top 10 (free), snyk.io/learn | SANS AI Security (~₹28,000 full) | 2–3 weeks |

| torch.compile / Mojo | PyTorch docs + fast.ai (free) | GPU cloud credits for practice (~₹2,000–5,000) | 4–6 weeks |

Total investment for the full stack: ₹10,000–15,000 in paid materials, plus 6–8 months of dedicated learning. The salary delta between a ₹18 LPA implementation engineer and a ₹42 LPA AI systems engineer is approximately ₹24 LPA — making this the highest ROI technical investment available in Indian IT in 2026.

The Certification That Wraps This Stack

No single certification covers all six skills, but the Google Professional Machine Learning Engineer certification (cloud.google.com/certification/machine-learning-engineer) is the closest recognised credential in Indian GCC hiring. It covers MLOps, model serving, and system design at scale — the architectural thinking layer that underpins all six skills above. Exam cost: ~₹15,000. Preparation time: 8–12 weeks with prior ML experience.

For the security-specific skill, the GIAC AI Security Fundamentals (GAIS) is emerging as the benchmark for GCC security roles, though it is expensive (~₹65,000 full package). The OWASP LLM Top 10 self-study remains the free equivalent for interview preparation.

Key Takeaways

- DSPy 3.1 replaces manual prompting with mathematical compilation. The

RAGModuleclass must be defined before you calloptimizer.compile(). - LangGraph 1.0 is mandatory for building production agentic loops. Set

recursion_limitexplicitly and add__init__.pyto all agent module directories. - GraphRAG solves the structural accuracy ceiling of pure vector search. The winning production pattern is hybrid: vector for breadth, graph traversal for relational depth.

- ColBERTv2 via RAGatouille provides token-level search precision for BFSI and legal applications where semantic nuance is compliance-critical.

- LLM Security is now a code-level dependency. Check LangChain Core security advisories, restrict deserialisation namespaces, and know the OWASP LLM Top 10.

- torch.compile cuts inference costs at scale. On 30-series GPUs, use

reduce-overheadmode with fp16 — notmax-autotunewith fp8. - The India salary premium for engineers who combine these six skills: ₹25–45 LPA at GCCs and funded product companies versus ₹12–18 LPA for implementation-only roles.

References

AmbitionBox India Salary Data (May 2026)

ambitionbox.com — AI/ML Engineer salary ranges by experience band and company

Glassdoor India — ML/AI Engineer Compensation

glassdoor.co.in — Bengaluru, Hyderabad, and Pune compensation data

NASSCOM GCC Report FY2025

nasscom.in/research — India GCC headcount, skill demand, and compensation trends

LinkedIn India Job Postings — April 2026

linkedin.com/jobs — “LangGraph”, “GraphRAG”, “DSPy” keyword searches, India location filter

FalkorDB GraphRAG Benchmark

falkordb.com/blog — Vector vs. GraphRAG accuracy on schema-bound queries

LangChain Core Security Advisories

github.com/langchain-ai/langchain/security/advisories — CVE and vulnerability disclosure history

OWASP LLM Top 10 (2025)

owasp.org/www-project-top-10-for-large-language-model-applications

DSPy Documentation v3.1

dspy.ai — MIPROv2 optimiser, compilation pipeline, production setup

PyTorch torch.compile Tutorial

pytorch.org/tutorials/intermediate/torch_compile_tutorial.html

Sathish Kumar covers AI engineering and career strategy for the Indian tech market at SkillUpgradeHub. This article is updated bi-annually to reflect current GCC hiring requirements and tool versions.